Search has never really been static. It just looked that way from the outside. Behind the scenes, it kept evolving, from simple indexing to complex ranking systems, and now toward something less visible but far more influential. The shift isn’t just about better results anymore. It’s about how those results are actually formed.

What’s changing now feels different. Instead of search engines simply pointing users to pages, they’re starting to interpret, summarize, and respond. That transition introduces a new layer beneath the familiar surface, one where websites are no longer just crawled but increasingly understood in context.

This is where Google WebMCP begins to enter the conversation. Not as a replacement for existing systems, but as a signal of where things are heading.

What Is Google WebMCP?

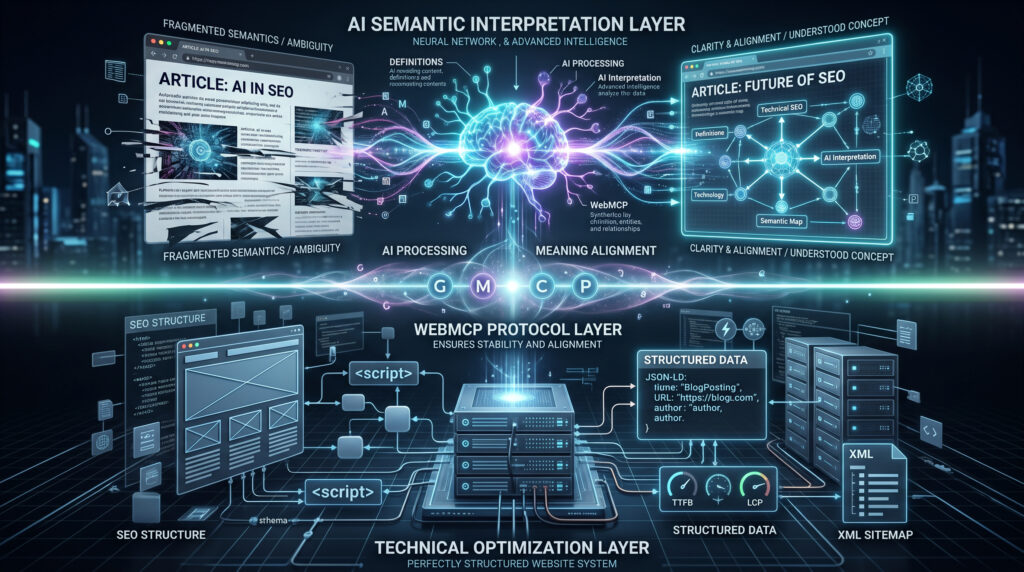

At a basic level, Google WebMCP is about helping machines interact with websites in a more direct and reliable way. Instead of depending only on crawling pages and interpreting raw structure, it introduces a clearer path for systems to understand what a website is actually trying to say.

A lot of confusion around what Google WebMCP is comes from expecting it to behave like a regular SEO update. It’s not just another tweak to search engine algorithms or an added layer to website indexing. It changes how information moves between websites and the systems reading them.

Earlier protocols were built for discovery. Bots would find pages, follow links, and store content. Understanding came later, and not always accurately. With Google WebMCP, the focus shifts toward interpretation from the start. The idea is simple: reduce the need for guessing.

It’s easier to think of it as a move from reading pages to understanding signals. That shift may seem small, but it changes how visibility works. When meaning is clearer, systems don’t have to rely on patterns alone.

How Google WebMCP Works

Looking at how WebMCP works through the lens of traditional crawling doesn’t quite explain it. This isn’t just a faster version of the same process.

With Google WebMCP, systems rely less on piecing things together and more on receiving structured intent. Earlier, meaning had to be inferred from scattered elements. Now, the aim is to make that meaning more direct.

This affects how outputs are generated. Modern systems don’t just store content; they respond. If the input is unclear, the response reflects that. When signals are stable, responses become more consistent.

In that sense, it isn’t replacing the web. It’s reducing how much interpretation is needed to make sense of it.
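
To make "structured intent" a little more concrete, here is a minimal sketch in Python. It is not the WebMCP API. The `ToolDescriptor` class, the `validate_call` helper, and the `search_flights` example are all hypothetical names invented for illustration. The point is only that when a site declares what an action is and what it expects, a system can check a request against that declaration instead of guessing.

```python
from dataclasses import dataclass, field


@dataclass
class ToolDescriptor:
    """Hypothetical description of one action a site exposes to agents."""
    name: str
    description: str
    # Parameter name -> expected Python type (a stand-in for a real schema).
    parameters: dict = field(default_factory=dict)


def validate_call(tool, arguments):
    """Return a list of problems; an empty list means the call matches the declaration."""
    problems = []
    for name, expected in tool.parameters.items():
        if name not in arguments:
            problems.append(f"missing parameter: {name}")
        elif not isinstance(arguments[name], expected):
            problems.append(f"{name} should be {expected.__name__}")
    for name in arguments:
        if name not in tool.parameters:
            problems.append(f"unexpected parameter: {name}")
    return problems


# Illustrative declaration; the tool name and fields are assumptions.
search_flights = ToolDescriptor(
    name="search_flights",
    description="Search one-way flights between two airports on a given date.",
    parameters={"origin": str, "destination": str, "date": str},
)

good_call = {"origin": "LHR", "destination": "JFK", "date": "2025-01-10"}
bad_call = {"origin": "LHR", "passengers": 2}

print(validate_call(search_flights, good_call))
print(validate_call(search_flights, bad_call))
```

The first call comes back clean, while the second is rejected with specific reasons. Nothing had to be inferred from page layout or surrounding text, which is the practical meaning of reducing interpretation.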

Why WebMCP Matters for Modern SEO

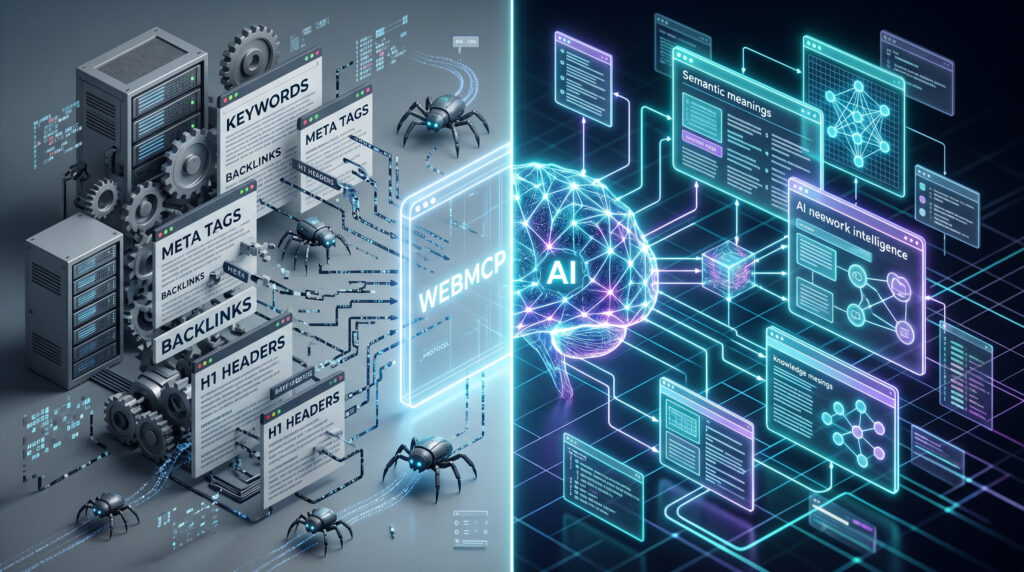

For years, SEO has quietly adapted to whatever search engines needed. First it was keywords, then links, then structure, then intent. Each phase added a layer, but the underlying process stayed familiar. Pages were created, crawled, indexed, and ranked. That cycle defined how visibility worked.

What’s shifting now is not just another layer. It’s the expectation behind it.

With systems moving toward interpretation rather than simple retrieval, the role of technical SEO starts to stretch. It’s no longer just about making a site accessible or indexable. It’s about making it understandable in a way that doesn’t leave room for misinterpretation. That’s a different kind of discipline.

This is where something like Google WebMCP starts to matter more than it initially appears. It signals a move toward clarity over inference. Instead of hoping systems connect the dots correctly, the emphasis shifts to presenting those connections in a way that doesn’t need decoding.

The interesting part is that this doesn’t replace older practices. Structure, speed, indexing, all of that still matters. But it’s no longer enough on its own. A technically sound site that still feels ambiguous at a conceptual level may struggle more than expected.

Modern SEO is starting to reward certainty. Not just whether your pages exist, but whether what they mean is consistently understood.

Role of AI Agents in Search Engines

Search engines aren’t just engines anymore. That shift has been gradual, almost easy to miss, but it’s real. What used to be a system that retrieved pages is now something that interprets, filters, and increasingly acts on behalf of the user. That’s where AI agents come in.

These agents don’t behave like traditional crawlers. They don’t just follow links and store content. Instead, they navigate information with a goal in mind. Answer a question. Compare options. Recommend something that fits. In doing that, they rely less on raw pages and more on structured understanding.

This is where the connection to Google WebMCP becomes clearer. If agents are expected to interpret and respond, they need inputs that are more precise than what traditional crawling provides. Ambiguous content slows them down. Clear signals speed them up.

That shift may not be obvious on the surface yet, but it’s already shaping how information flows underneath.

How AI Agents Crawl and Understand Websites

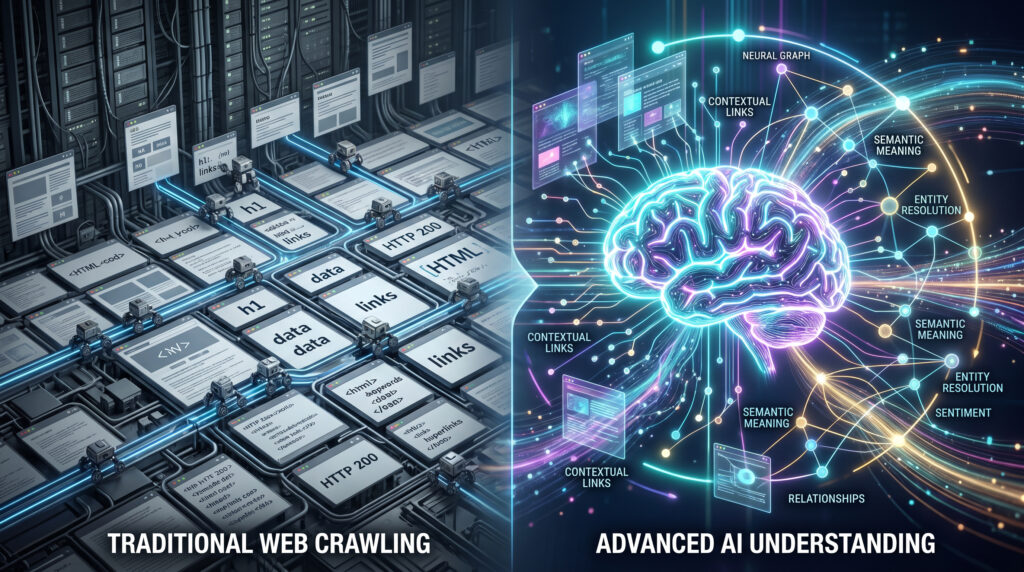

If you go back to what search engine crawling is in the traditional sense, it was pretty straightforward. Bots showed up, moved from link to link, grabbed whatever was on the page, and stored it. That was the job. The crawler didn’t really “get” anything. It just collected.
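
That link-to-link routine can be sketched in a few lines of Python. This is a simplified illustration, not how any particular search engine works. The `SITE` dictionary is a hypothetical three-page site held in memory so the example needs no network access, and `LinkCollector` and `crawl` are names made up for the sketch.

```python
from collections import deque
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags; it does not interpret the text."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for key, value in attrs:
                if key == "href" and value:
                    self.links.append(value)


def crawl(site, start):
    """Breadth-first crawl over an in-memory site; returns URL -> stored HTML."""
    stored = {}
    queue = deque([start])
    while queue:
        url = queue.popleft()
        if url in stored or url not in site:
            continue  # already collected, or the link leads nowhere
        html = site[url]
        stored[url] = html  # collected, not understood
        parser = LinkCollector()
        parser.feed(html)
        queue.extend(parser.links)
    return stored


# Hypothetical site used only for this sketch.
SITE = {
    "/": '<a href="/pricing">Pricing</a> <a href="/about">About</a>',
    "/pricing": '<p>Plans start at $10.</p> <a href="/">Home</a>',
    "/about": "<p>We build scheduling software.</p>",
}

pages = crawl(SITE, "/")
print(list(pages))
```

Notice what the sketch never does: it never asks what the pricing page means or whether the about page agrees with it. It finds, follows, and stores.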

For a long time, that was enough. But it’s starting to show its limits.

Now, with smarter systems in play, search engine crawling isn’t just about gathering pages anymore. It’s about figuring out what those pages are actually saying. Not in isolation, but in relation to everything else out there. One page connects to another. Ideas overlap. Context starts to matter more than raw content.

It’s less about scanning for keywords and more about picking up signals that make something easier to understand. Does the explanation hold together? Does it say the same thing throughout? Does it line up with how others describe the same topic?

Crawling hasn’t disappeared. It’s still there, doing its job. But the expectation around it has changed. Just collecting information doesn’t carry the same weight anymore. What matters now is whether that information can be understood without second-guessing it.

That change is subtle, but it’s already influencing how websites are interpreted behind the scenes.

WebMCP vs Traditional Crawling Methods

If you want to see where Google WebMCP fits in, it helps to step back and look at how things used to work. Traditional crawling was pretty linear. Bots would find pages, move through links, collect data, and only then try to figure out what it all meant. It did the job, especially when the web was smaller and simpler.

But that approach has always had a bit of friction built into it.

Most of the understanding happened after the collection phase. Systems gathered signals first and tried to connect the dots later. Sometimes those dots lined up. Sometimes they didn’t. A certain level of guesswork was always part of the process.

With Google WebMCP, the shift isn’t dramatic, but it’s noticeable. Instead of relying so heavily on interpretation after the fact, there’s more emphasis on making things clearer from the beginning. The idea isn’t to get rid of crawling. It’s to reduce how much decoding needs to happen once the crawl is done.

If you look closely, the contrast starts to show up in small but important ways:

- Traditional crawling focuses on discovery; WebMCP leans toward structured understanding

- Older methods depend on signals like links and keywords; WebMCP emphasizes intent

- Crawlers collect and index; newer systems aim to interpret as they go

- Ambiguity is tolerated in older models; newer approaches try to minimize it

What this creates is not a sudden replacement, but a gradual shift. Both systems will likely exist together for a while. But over time, the balance moves toward methods that reduce uncertainty and improve clarity.

That’s where Google WebMCP starts to feel less like an experiment and more like a natural progression.

Impact of WebMCP on Technical SEO

The impact of Google WebMCP doesn’t show up as a single change. It creeps in through expectations. What used to be considered “good enough” from a structural standpoint starts to feel incomplete when systems are trying to interpret meaning, not just access content.

This is where things get uncomfortable for traditional setups.

Technical work has always focused on making websites readable for machines. Clean code, proper indexing, structured data, all of that still matters. But now there’s an added layer. It’s not just about whether a system can read your site. It’s about whether it can understand it without hesitation.

A few shifts start to become noticeable:

- Clean structure still matters, but clarity of meaning matters more

- Markup helps, but a consistent explanation across pages carries more weight

- Fast and accessible sites are expected, not differentiators anymore

- Fragmented messaging weakens interpretation, even if the site is technically sound
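
One way to keep markup and messaging aligned is to generate structured data from a single source rather than writing it by hand on each page. The sketch below assumes a hypothetical business and uses the schema.org `Organization` type in JSON-LD. The `ORGANIZATION` values and the helper names are placeholders, and this is one possible approach rather than anything WebMCP requires.

```python
import json

# Hypothetical single source of truth for how the business describes itself.
ORGANIZATION = {
    "name": "Example Scheduling Co.",
    "url": "https://www.example.com",
    "description": "Scheduling software for dental clinics.",
}


def organization_jsonld(org):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": org["name"],
        "url": org["url"],
        "description": org["description"],
    }
    return json.dumps(data, indent=2)


def script_tag(org):
    """Wrap the JSON-LD in the script element that pages embed in their HTML."""
    return '<script type="application/ld+json">\n' + organization_jsonld(org) + "\n</script>"


print(script_tag(ORGANIZATION))
```

Because every page template would call the same function, the description cannot drift between the home page, the about page, and the footer. The markup helps, but the consistency is what carries the weight.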

What this leads to is a broader definition of what technical SEO actually includes. It starts overlapping with content and strategy in ways that weren’t as obvious before.

The presence of WebMCP doesn’t make older practices irrelevant. It just raises the bar. Instead of optimizing for access alone, websites now need to optimize for accurate interpretation as well. That’s a different kind of precision, and it changes how technical decisions are made.

How to Optimize Your Website for AI Agents

Optimizing for systems that interpret rather than just crawl requires a shift in mindset. You’re no longer preparing pages to be found. You are preparing them to be understood without confusion.

With something like Google WebMCP shaping expectations, the focus moves away from isolated fixes and toward overall clarity. A technically perfect page that says three slightly different things across sections can still create friction. What matters more is whether the meaning holds steady from start to finish.

There are a few practical directions that start to matter more:

- Keep explanations consistent across pages, not just within one page

- Avoid unnecessary variation in how core ideas are described

- Structure content so the intent is obvious without relying on context

- Reduce ambiguity instead of adding more information
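
The first two points can be checked roughly with a small script. The sketch below compares how each page describes the business and flags pairs that differ a lot. It uses Python's standard `difflib` module; the page paths, the sample descriptions, and the 0.6 threshold are assumptions chosen for illustration. String similarity is only a crude proxy for meaning, so treat the output as a prompt for human review.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a, b):
    """Ratio in [0, 1] of how alike two strings are, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def inconsistent_pairs(descriptions, threshold=0.6):
    """Return page pairs whose descriptions fall below the similarity threshold."""
    flagged = []
    for (page_a, text_a), (page_b, text_b) in combinations(descriptions.items(), 2):
        score = similarity(text_a, text_b)
        if score < threshold:
            flagged.append((page_a, page_b, round(score, 2)))
    return flagged


# Hypothetical one-line descriptions pulled from three pages of the same site.
DESCRIPTIONS = {
    "/": "Scheduling software for dental clinics.",
    "/about": "Scheduling software for dental clinics.",
    "/pricing": "An AI platform for enterprise growth.",
}

for page_a, page_b, score in inconsistent_pairs(DESCRIPTIONS):
    print(f"{page_a} and {page_b} describe the business differently (similarity {score})")
```

Here the pricing page gets flagged against both other pages, because it describes a different product than the rest of the site does. That is exactly the kind of variation the list above suggests removing.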

This is where the idea of website optimization begins to overlap with communication, not just performance. It’s less about tweaking elements and more about aligning how the entire site expresses what it does.

In that sense, optimizing for Google WebMCP isn’t about adding something new. It’s about removing the friction that made understanding harder in the first place.

Future of SEO with AI and Web Protocols

Predicting the future of SEO has never been straightforward. Changes don’t arrive loudly. They settle in, piece by piece, until suddenly they feel normal. That’s what’s happening now.

As systems move from listing results to actually responding, web protocols start to matter more. They help translate what a site says into something machines can reliably understand. Without that, too much depends on guesswork.

This doesn’t replace traditional SEO. It stretches it. Rankings still matter, but they are no longer the whole game. What is starting to count more is consistency. When your message stays stable across the web, systems begin to trust it and reuse it.

What This Means Going Forward

At a glance, nothing seems dramatically different. Websites still exist. Pages are still indexed. Results still appear. But underneath that surface, the way visibility is earned is shifting in a direction that’s harder to measure and easier to overlook.

The introduction of something like WebMCP points to a simple idea. Systems are moving toward understanding, not just retrieval. And once that becomes the default expectation, the way websites communicate starts to matter more than how they’re structured alone.

What this means in practice is that waiting doesn’t keep things stable. It creates a gap. While some brands adapt their content and structure to be more interpretable, others continue optimizing for systems that are gradually changing.

This isn’t about reacting to a trend. It’s about recognizing a direction early enough to adjust without friction. Because once interpretation becomes the baseline, catching up is always harder than adapting as things shift.

That’s the space where teams like Being Digitalz are starting to focus, looking beyond traditional optimization and toward how websites are actually understood by modern systems.